This tutorial explains how you can easily scrape Google Search results and save the listings in a Google Spreadsheet. It can be useful for monitoring the organic search rankings of your website in Google for particular search keywords vis-a-vis other competing websites. Or you can exporting search results in a spreadsheet for deeper analysis.

There are powerful command-line tools, curl and wget for example, that you can use to download Google search result pages. The HTML pages can then be parsed using Python’s Beautiful Soup library or the Simple HTML DOM parser of PHP but these methods are too technical and involve coding. The other issue is that Google is very likely to temporarily block your IP address should you send them a couple of automated scraping requests in quick succession.

Google Search Scraper using Google Spreadsheets

If you ever need to extract results data from Google search, there’s a free tool from Google itself that is perfect for the job. It’s called Google Docs and since it will be fetching Google search pages from within Google’s own network, the scraping requests are less likely to get blocked.

The idea is simple. We have a Google Sheet that will fetch and import Google search results using the ImportXML function. It then extracts the page titles and URLs using an XPath expression and then grabs the favicon images using Google’s own favicon converter.

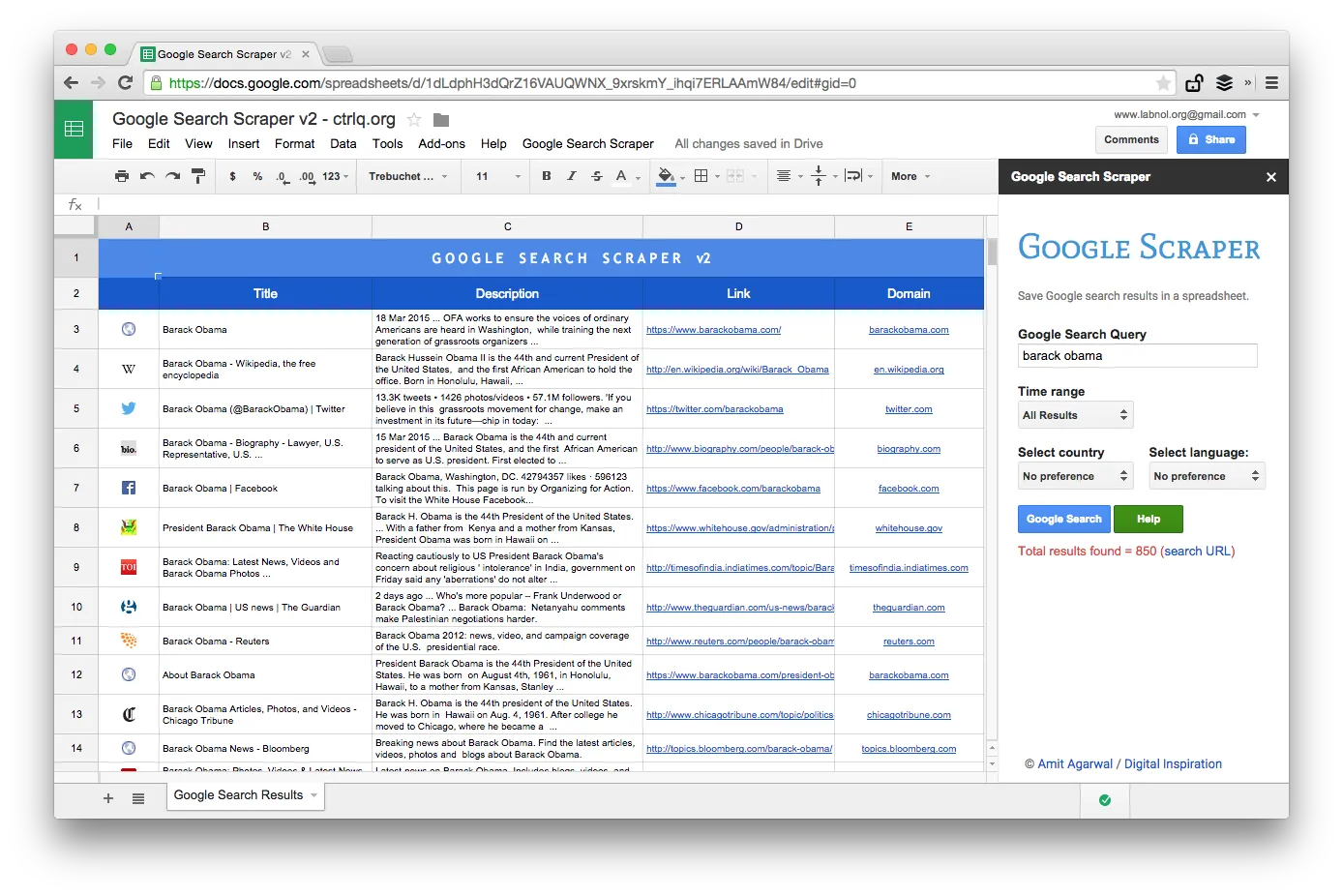

The search scraper is available in two editions - the free edition that only fetches the top ~20 results while the premium edition downloads the top 500-1000 search results for your search keywords while preserving the ranking order.

Features

Free

Premium

Maxiumum number of Google search results fetched per query

~20

~200-800

Details fetched from Google Search Results

Web page title, URL and website favicon

Web page title, search snippet (description), page URL, site’s domain and favicon

Perform time limited searches

No

Yes

Sort search results by date or by relevance

No

Yes

Limit Google Search results by language or region (country)

No

Yes

PDF Manual

None

Included

Support options

None

Choose your Google Search Scraper edition

[premium_gas premium=“MMWZUKU3WA2ZW” platinum=“9F4DE545U3MBW”]

Google Search inside Google Sheets

To get started, open this Google sheet and copy it to your Google Drive. Enter the search query in the yellow cell and it will instantly fetch the Google search results for your keywords.

And now that you have the Google Search results inside the sheet, you can export the Google Search results as a CSV file, publish the sheet as an HTML page (it will refresh automatically) or you can go a step further and write a Google Script that will send you the sheet as PDF daily.

Advanced Google Scraping with Google Sheets

This is a screenshot of the Premium edition. It fetches more number of search results, scrapes more information about the web pages and offers more sorting options. The search results can also be restricted to pages that were published in the last minute, hour, week, month or year.

Spreadsheet Functions for Scraping Web Pages

Writing a scraping tool with Google sheets is simple and involve a few formulas and built-in functions. Here’s how it was done:

- Construct the Google Search URL with the search query and sorting parameters. You can also use advanced Google search operators like site, inurl, around and others.

https://www.google.com/search?q=Edward+Snowden&num=10

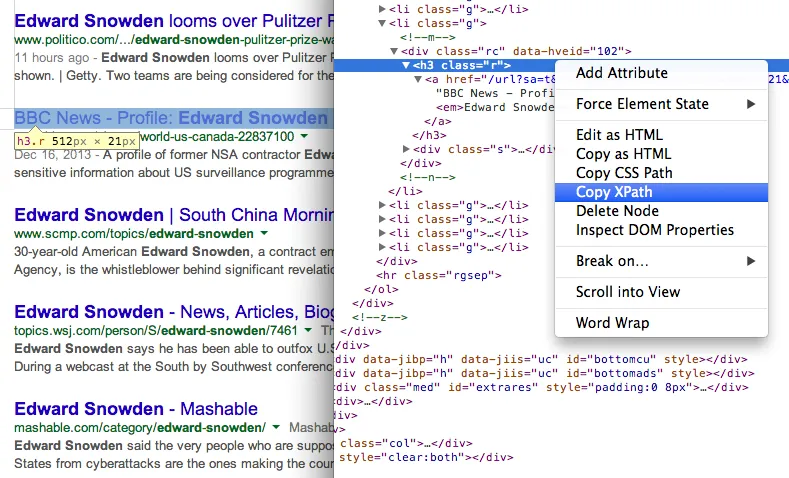

- Get the title of pages in search results using the XPath //h3 (in Google search results, all titles are served inside the H3 tag).

=IMPORTXML(STEP1, “//h3[@class=‘r’]”)

Find the XPath of any element using Chrome Dev Tools 7. Get the URL of pages in search results using another XPath expression

Find the XPath of any element using Chrome Dev Tools 7. Get the URL of pages in search results using another XPath expression

=IMPORTXML(STEP1, “//h3/a/@href”)

- All external URLs in Google Search results have tracking enabled and we’ll use Regular Expression to extract clean URLs.

=REGEXEXTRACT(STEP3, ”\/url\?q=(.+)&sa”)

- Now that we have the page URL, we can again use Regular Expression to extract the website domain from the URL.

=REGEXEXTRACT(STEP4, “https?:\/\/(.[^\/]+)”)

- And finally, we can use this website with Google’s S2 Favicon converter to show the favicon image of the website in the sheet. The 2nd parameter is set to 4 since we want the favicon images to fit in 16x16 pixels.

=IMAGE(CONCAT(“http://www.google.com/s2/favicons?domain=”, STEP5), 4, 16, 16)