RSS feeds have completely changed the way we consume information on the web. You no longer have to visit The New York Times or CNN every hour of the day to check news headlines because the feed reader is doing that for you behind the scenes.

The only problem is that not all web content is available via feeds. For instance, Amazon, eBay and Google Product Search (Froggle) are good places to find discount deals on books and electronic gadgets but unfortunately, none of these shopping sites publish feeds.

Monitor HTML Web Pages with Google Docs

Problem: Let’s say you are looking for some discount deals on iPod Nano. An option here is that you open the the Google Shopping page and search for iPod. If you don’t find the right price, repeat the same cycle next day. This may sound easy but imagine doing this for ten other products on five different shopping sites. Tedious, right?

Solution: What we can do here is build a simple spreadsheet in Google Docs that will monitor prices across all these search pages and will present them in a table so you don’t just track prices but also compare them at the same time.

To get started, you need access to Google Docs and some basic knowledge of XPath. Don’t let this scare you - XPath is a simple way to access information contained inside HTML web pages. For instance, if you want to know about all URLs that are mentioned on any web page, the XPath expression would be //a[@href]. Some more examples:

//strong means all the items in the web page with strong html tags

//@href means all the items in the web page with href element, i.e., the URLs in that page.

If you feel that writing XPath expressions is a tricky job, get the XPath checker add-on for Firefox that will help you easily determine the XPath of any element on a web page.

Scrap Web Pages with Google Docs using ImportXML and XPath

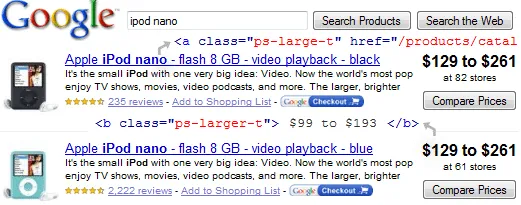

This is the search page for ‘ipod nano’ inside Google Products. As you may noticed already, the result title is formatted with CSS class ps-large-t while the the product price using the class ps-larger-t - you can easily find these class names via Firebug or from the HTML source.

Now we’ll create a table inside Google spreadsheet that will have the name, price and URL that will link to that product listing in Google Docs. You can use the same approach to get product data from other sites like Amazon, eBay, Buy.com, etc.

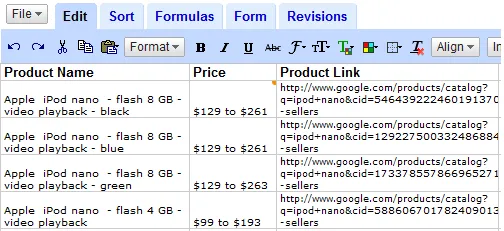

Here’s how the final spreadsheet looks like - all this is live data and will update automatically if the corresponding information is updated on Google Products.

Get External Data in Google Docs with ImportXML

As you may have seen in the previous tutorial on Google Docs, there’re built-in spreadsheet functions to help you easily import external data into Google Docs. One such useful function is ImportXML that, like ImportHTML, can be used for screen-scrapping.

The syntax is =ImportXML("web page URL", "XPath Expression")

Coming back to the spreadsheet, in order to fetch the price of ‘ipod nano’, we type the following formula:

=ImportXML("google.com/products?q=ipod+nano", "//b[@class='ps-larger-t']")

You may replace ‘ipod nano’ with any other product name like ‘harry+potter’, ‘nikon+d60’, etc.

To enter this function into Google Docs, click an empty cell, press F2 and paste. See this Google Docs movie:

Similarly, for the product name, we use this formula:

=ImportXML("www.google.com/products?q=ipod+nano", "//a[@class='ps-large-t']")

And for the URL (product hyperlink), the formula is:

=ImportXML("http://www.google.com/products?q=ipod+nano", "//a[@class='ps-large-t']//@href")

You need to concatenate this with http://www.google.com since Google Products uses relative URLs. This can be easily fixed by adding another column with the formula

=HYPERLINK("http://www.google.com/"&B3,"click here")

Related: Import data from HTML Web Pages into Excel

Subscribe to Web Page Changes via Feeds

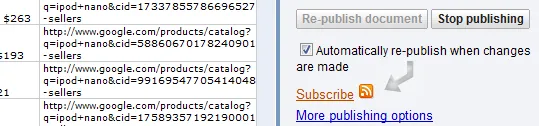

You don’t have to check this Google Docs Spreadsheet manually to see if prices have since yesterday - just select publish followed by “Automatically re-publish when changes are made” and subscribe to the document in your favorite RSS reader.

The author is an Excel whiz kid and blogs at Chandoo.org. This site is a a gold mine of tips related to data manipulation & visualization through Excel and other spreadsheet programs.