The XML Sitemap file is like a directory of all web pages that exist on your website or blog. Google, Bing and other search engines can use these sitemap files to discover pages on your site that their search bots may have otherwise missed during regular crawling.

The Problem with Blogger Sitemap Files

A complete XML sitemap file should list every page of a site, but that’s not the case if your blog is hosted on the Blogger (blogspot) platform.

Google accepts sitemaps in XML, RSS, or Atom formats. It recommends using both XML sitemaps and RSS/Atom feeds for optimal crawling.

The default Atom feed of any Blogger blog contains only the most recent blog posts. That’s a limitation because older blog pages that are missing from this default feed may never get indexed by search engines. There is, however, a simple solution to this problem.

Generate XML Sitemap for your Blogger Blog

This section is valid for both regular Blogger blogs (that have a blogspot.com address) and also the self-hosted Blogger blogs that use a custom domain (like postsecret.com).

Here’s what you need to do to expose your blog’s complete site structure to search engines with the help of an XML sitemap.

-

Open the Sitemap Generator and type the full address of your Blogger blog.

-

Click the Generate Sitemap button and this tool will instantly create the XML file with your sitemap. Copy the entire text to your clipboard.

-

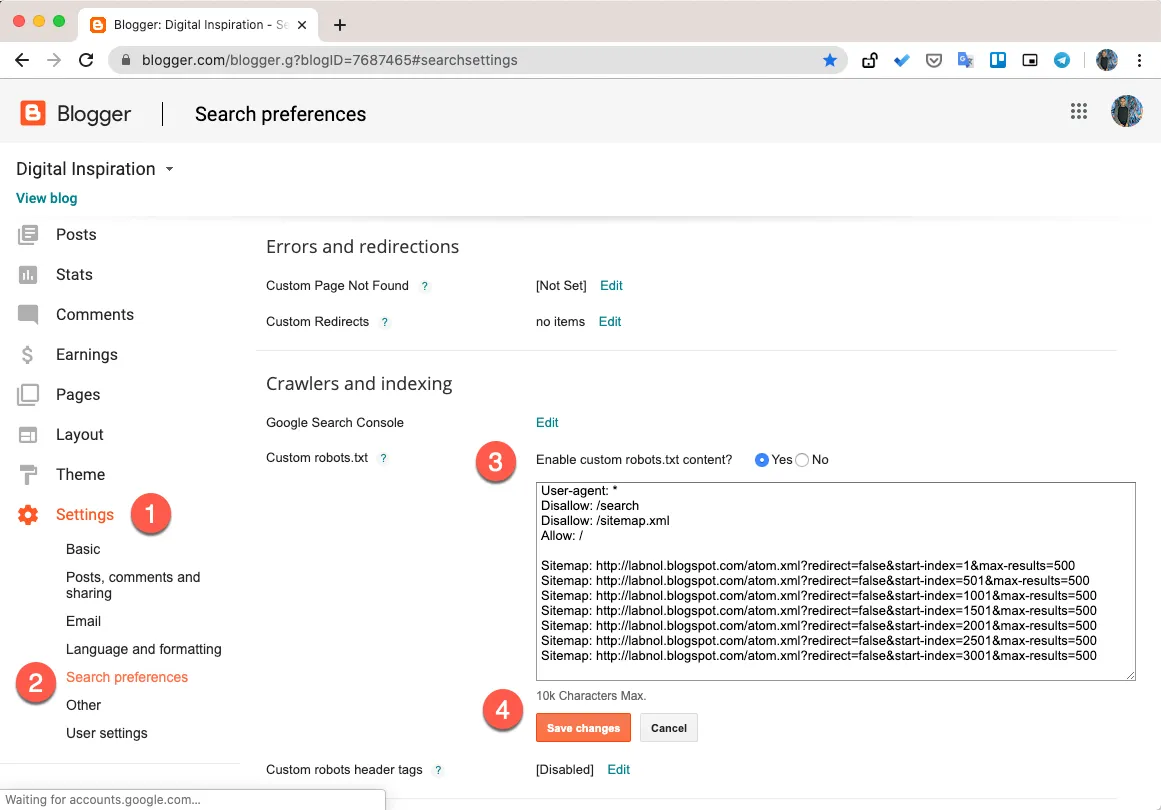

Next, go to your Blogger.com dashboard, navigate to Settings –> Search Preferences, enable Custom robots.txt option (available in the Crawling and Indexing section). Paste the XML sitemap here and save your changes.

And we are done. Search engines will automatically discover your XML sitemap files via the robots.txt file and you don’t have to ping them manually.

Internally, the XML sitemap generator counts all the blog posts that are available in your Blogger blog. It then splits the posts into batches of 500 and generates a separate XML feed for each batch. Search engines can thus discover every single post on your blog, since each post is part of one of these XML sitemaps.
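The batching idea can be sketched in a few lines of Python. This is only an illustration, not the generator's actual code: the function name `sitemap_lines` is hypothetical, and the feed URL pattern (`atom.xml` with `redirect=false`, `start-index` and `max-results` parameters) is an assumption about how Blogger exposes paged feeds. The post count is passed in by hand here rather than fetched from the blog.

```python
def sitemap_lines(blog_url: str, total_posts: int, batch_size: int = 500) -> list[str]:
    """Build one robots.txt 'Sitemap:' line per batch of posts.

    Assumption: Blogger serves paged Atom feeds at
    /atom.xml?redirect=false&start-index=N&max-results=M
    """
    base = blog_url.rstrip("/")
    lines = []
    # start-index is 1-based, so batches begin at 1, 501, 1001, ...
    for start in range(1, total_posts + 1, batch_size):
        lines.append(
            f"Sitemap: {base}/atom.xml?redirect=false"
            f"&start-index={start}&max-results={batch_size}"
        )
    return lines


if __name__ == "__main__":
    # Hypothetical blog address with 1,200 posts: three batches are needed.
    for line in sitemap_lines("https://example.blogspot.com/", 1200):
        print(line)
```

For a blog with 1,200 posts this produces three lines, starting at posts 1, 501 and 1001, which would then go into the custom robots.txt box.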

PS: If you have switched from Blogger to WordPress, it still makes sense to submit XML sitemaps of your old Blogspot blog, as that will help search engines discover your new WordPress blog posts and pages.