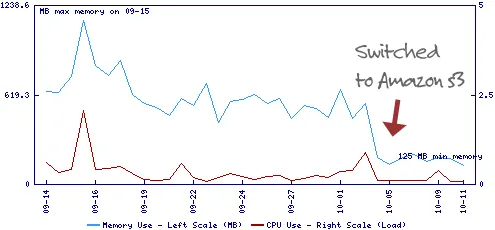

Last week, I moved all the common web images, CSS, JavaScript and other static files of this blog to the Amazon S3 storage service, and that alone reduced the average CPU load and memory usage of the web server by almost 90% (see the graph above).

Why Use Amazon S3 Storage for Hosting Files

There are two main advantages to hosting images on Amazon S3. One, your site's downtime is reduced because your main web server handles fewer concurrent connections (and hence needs less memory). Two, the site's overall load time improves because static images and other files are served from Amazon's high-capacity infrastructure instead of your own server.

How to Host Images on Amazon S3 Storage

Let’s assume that you have an account at amazon.com (who doesn’t?) and that you want to use the sub-domain files.labnol.org to serve images that are actually stored on Amazon Simple Storage Service (S3).

Step 1: Go to Amazon.com and sign up for the S3 service. You can use the same account that you use for shopping on the main amazon.com portal.

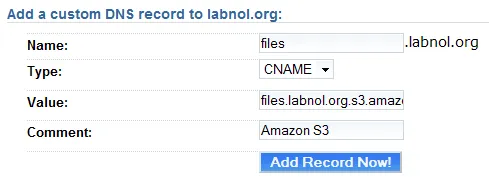

Create CNAME Record for Amazon S3

Step 2: Log in to the control panel of your web hosting service and create a new CNAME record. Set its name to files (the same as the sub-domain) and its value to files.labnol.org.s3.amazonaws.com (for details, check this article on Amazon S3 buckets).
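The CNAME only works if the bucket name matches the sub-domain exactly, because S3 picks the bucket from the Host header of the request, and with a CNAME that header is files.labnol.org. As a small sketch (the function names `s3_cname_target` and `cname_record` are hypothetical, and it assumes the legacy global `s3.amazonaws.com` endpoint used in this article), this is how the record's name and value follow from the sub-domain:

```python
def s3_cname_target(hostname: str) -> str:
    """Return the CNAME value that points `hostname` at an S3 bucket.

    Assumes the legacy global endpoint s3.amazonaws.com (as in this post).
    The bucket must be named exactly like the hostname for this to work.
    """
    bucket = hostname.lower().rstrip(".")  # DNS names are case-insensitive
    return f"{bucket}.s3.amazonaws.com"


def cname_record(zone: str, hostname: str) -> tuple[str, str]:
    """Split `hostname` into (record name relative to `zone`, CNAME value)."""
    suffix = "." + zone
    if not hostname.endswith(suffix):
        raise ValueError(f"{hostname} is not inside the zone {zone}")
    name = hostname[: -len(suffix)]  # e.g. "files" for files.labnol.org
    return name, s3_cname_target(hostname)


name, value = cname_record("labnol.org", "files.labnol.org")
print(name, value)
```

Buckets created outside the US Standard (us-east-1) region may need a regional endpoint in the CNAME value instead of the global one.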

Step 3: Install S3 Fox, my favorite Amazon S3 client, which runs inside Firefox. Check this S3 guide for a list of other popular S3 clients.

Step 4: Now associate S3 Fox with your Amazon S3 account. First, go here to get your Access Key ID and Secret Access Key. Then click the S3 Fox button in the Firefox status bar and complete the association via “Manage Accounts.”

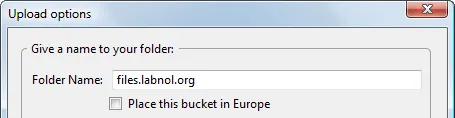

Create folders to host files

Step 5: In the “Remote View” tab of S3 Fox, create a new folder (in S3 terms, a bucket) with exactly the same name as your sub-domain, files.labnol.org. Drag and drop your images, static files and other folders from the desktop into this folder, and they’ll be uploaded to your Amazon S3 account automatically.
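S3 Fox does the uploading, but it helps to know what the drag-and-drop creates: each file becomes an object whose key is its path relative to the folder you dropped, and once the CNAME is in place it is reachable under the sub-domain. The sketch below is a hypothetical dry run (`plan_uploads` is not part of S3 Fox or any AWS library) that lists those keys, guesses each file's Content-Type with Python's `mimetypes` module, and builds the resulting public URL. Actually sending the files is left to S3 Fox or another S3 client.

```python
import mimetypes
from pathlib import Path


def plan_uploads(local_dir: str, subdomain: str) -> list[dict]:
    """Hypothetical dry run: describe the S3 objects an upload would create.

    Each file's key is its path relative to local_dir, using "/" separators,
    and it is served at http://<subdomain>/<key> via the CNAME from Step 2.
    """
    root = Path(local_dir)
    plan = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue  # S3 has no real folders; "/" in the key implies them
        key = path.relative_to(root).as_posix()
        content_type, _ = mimetypes.guess_type(path.name)
        plan.append({
            "key": key,
            # S3 returns the stored Content-Type, which browsers rely on
            "content_type": content_type or "application/octet-stream",
            "url": f"http://{subdomain}/{key}",
        })
    return plan
```

For example, a local folder containing `images/logo.png` and `css/style.css` yields the keys `css/style.css` and `images/logo.png`, served at `http://files.labnol.org/css/style.css` and `http://files.labnol.org/images/logo.png`.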

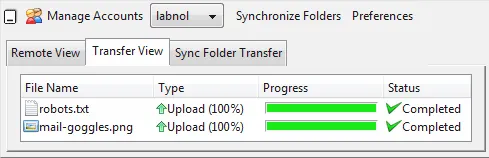

Amazon S3 Upload Queue

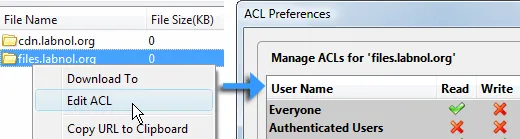

Step 6: This is important. By default, all files uploaded to Amazon S3 are accessible only to their owner, but since you are hosting files for a public website, anyone should be able to read them.

Set File Permissions on Amazon S3

To change the default permission, right-click the main folder files.labnol.org and choose “Edit ACL”. Then grant “Read” to “Everyone” and choose “Apply to All Folders”.
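The ACL route above sets permissions on the files that already exist. A different technique, not the one used in this post, is a bucket policy that grants `s3:GetObject` to everyone, which also covers files you upload later. The sketch below only builds the policy document in the standard IAM policy JSON format; the bucket name is the one from this example, and you would paste the output into the bucket's policy editor. On newer AWS accounts, the bucket's Block Public Access settings may have to be relaxed before either approach takes effect.

```python
import json


def public_read_policy(bucket: str) -> str:
    """Build a bucket policy that lets anyone GET any object in `bucket`."""
    policy = {
        "Version": "2012-10-17",  # current IAM policy language version
        "Statement": [
            {
                "Sid": "PublicReadGetObject",
                "Effect": "Allow",
                "Principal": "*",          # anyone, including anonymous users
                "Action": "s3:GetObject",  # read-only: no listing or writing
                # "/*" matches every object key in the bucket
                "Resource": f"arn:aws:s3:::{bucket}/*",
            }
        ],
    }
    return json.dumps(policy, indent=2)


print(public_read_policy("files.labnol.org"))
```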

Step 7: This is optional, but if you don’t want your files to be crawled by Google and other spiders, you can create a robots.txt file with the following contents and place it in the root directory of the bucket:

User-agent: *
Disallow: /

This can be a good idea because Amazon S3 charges you for every byte of data requested, so blocking well-behaved web bots can reduce your overall bandwidth bill.
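To double-check that the two-line robots.txt really blocks every crawler, you can feed it to Python's standard `urllib.robotparser` module, which applies the same matching rules compliant bots use. The image URL below is a made-up example under the sub-domain; the real file would be served from `http://files.labnol.org/robots.txt`.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse rules from text, no network fetch

# "User-agent: *" applies to every bot; "Disallow: /" matches every path
for agent in ("Googlebot", "Bingbot"):
    allowed = parser.can_fetch(agent, "http://files.labnol.org/images/logo.png")
    print(agent, "allowed" if allowed else "blocked")
```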

Is Amazon S3 More Expensive Than Your Web Host

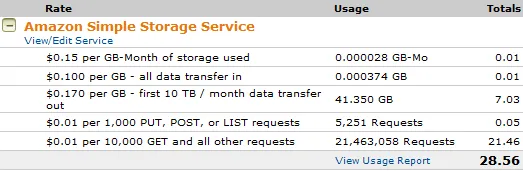

Here’s a detailed report of my Amazon S3 usage for one week. I’ll have to shell out around $28 per week, or about $120 per month.

Itemized Bill - Amazon S3 storage

DreamHost Private Server hosting used to cost me around $150-200 a month, but after moving the images to Amazon S3, that charge has dropped by around 60%, so the total monthly cost of hosting the website and images has stayed roughly the same.
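The arithmetic behind that comparison is easy to check. This sketch uses only the figures quoted above ($28 per week for S3, $150-200 per month for hosting, a 60% reduction) and assumes an average month of 52/12 weeks.

```python
WEEKS_PER_MONTH = 52 / 12  # assumption: average month of about 4.33 weeks

s3_weekly = 28.0
s3_monthly = s3_weekly * WEEKS_PER_MONTH

host_before = (150.0, 200.0)  # monthly hosting range before the move
reduction = 0.60              # hosting bill dropped by around 60%
host_after = tuple(c * (1 - reduction) for c in host_before)

# New total = reduced hosting bill + S3 bill
total_after = tuple(h + s3_monthly for h in host_after)

print(f"S3 per month:       ${s3_monthly:.0f}")
print(f"Hosting after move: ${host_after[0]:.0f} to ${host_after[1]:.0f}")
print(f"Total after move:   ${total_after[0]:.0f} to ${total_after[1]:.0f}")
print(f"Total before move:  ${host_before[0]:.0f} to ${host_before[1]:.0f}")
```

With these inputs S3 comes to about $121 a month and the new total to roughly $181 to $201, close to the old $150 to $200 range.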